SLOs for Internal Services — What to Track When You Don’t Have Users

Published on 12 March 2026 by Zoia Baletska

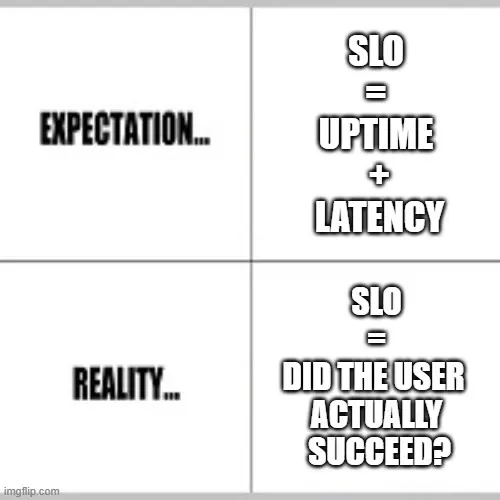

Service Level Objectives (SLOs) are a cornerstone of modern reliability engineering. They help teams understand, measure, and maintain the reliability of the services they build. For public-facing services, choosing what to measure is usually straightforward: uptime, latency, error rates, or user satisfaction can all serve as meaningful indicators. But what happens when your service doesn’t have external users? Internal microservices, background jobs, data pipelines, and internal business systems still power your operations, yet they produce no user-facing metrics to measure.

This post explains how to define meaningful SLOs for internal services, what to track, and common pitfalls teams fall into.

Reliability vs. Speed: Finding the Right Balance

Even internal services have a cost when they fail or slow down. A delayed internal API can hold up dozens of dependent services, slow reporting pipelines, or impact operational processes. But unlike customer-facing services, internal services are often under pressure to be fast and flexible.

SLOs help teams quantify the trade-off between reliability and speed:

- High reliability might mean stricter SLIs, retries, or longer processing times.
- High speed might accept occasional minor failures to deliver results quickly.

Defining internal SLOs is about understanding your downstream dependencies. Ask: “If this service fails or slows down, who or what is affected, and how severely?”
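One way to start answering that question is to walk a dependency map and list everything that sits downstream of a service. The sketch below is only an illustration: the `CONSUMERS` map and every service name in it are hypothetical, and in practice the map would come from whatever service catalogue or tracing data you already have.

```python
from collections import deque

# Hypothetical dependency map: service -> services that call it directly.
# All names are illustrative, not taken from any real system.
CONSUMERS = {
    "auth-api": ["billing-worker", "reporting-etl"],
    "billing-worker": ["invoice-mailer"],
    "reporting-etl": ["exec-dashboard"],
    "invoice-mailer": [],
    "exec-dashboard": [],
}


def affected_by(service, consumers):
    """Return each direct or transitive consumer of `service` with its hop distance."""
    distance = {}
    queue = deque([(service, 0)])
    while queue:
        current, hops = queue.popleft()
        for consumer in consumers.get(current, []):
            # Skip anything already seen so cycles cannot loop forever.
            if consumer not in distance and consumer != service:
                distance[consumer] = hops + 1
                queue.append((consumer, hops + 1))
    return distance


if __name__ == "__main__":
    impact = affected_by("auth-api", CONSUMERS)
    for name, hops in sorted(impact.items(), key=lambda kv: (kv[1], kv[0])):
        print(f"{name}: {hops} hop(s) downstream")
```

The hop distance is a rough proxy for severity: direct consumers usually feel a failure first, while services further away may be shielded by caches or retries.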

How to Measure SLOs Without Users

Without external traffic or user-facing metrics, you need to look at the systems themselves and the teams that depend on them. Some approaches include:

- Upstream/Downstream Dependency Tracking
  - Measure request success rates between services. If your internal API fails 5% of the time, downstream services may stall.
  - Track job completion rates for background processes or data pipelines.
- Time-to-Completion Metrics
  - Record the latency of internal API calls, batch job completion, or data delivery pipelines.
  - Compare against expected processing windows (e.g., “daily ETL completes in < 30 minutes”).
- Error and Retry Analysis
  - Track failed or retried operations.
  - SLOs can define acceptable thresholds for retries or failures without impacting dependent services.
- Internal Service Consumer Feedback
  - Collect feedback from teams relying on the service. Even simple surveys or incident reports can quantify how reliability affects productivity.
- Capacity & Queue Monitoring
  - For background jobs or message queues, SLOs can track queue length, backlog, and processing rates.
  - This ensures internal consumers aren’t waiting indefinitely for data or processing results.
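Several of the approaches above reduce to simple ratios over raw counters. The following is a minimal sketch, assuming you can already export request counts, job durations, and queue rates for a measurement window; the `Window` class, its field names, and the numbers in `EXAMPLE` are all invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Window:
    """Raw counters for one measurement window (hypothetical shape)."""
    total_requests: int
    failed_requests: int
    job_durations_min: list  # completion time of each batch run, in minutes
    queue_depth: int         # messages currently waiting
    enqueue_per_min: float   # arrival rate
    process_per_min: float   # processing rate


def success_rate(w):
    """Fraction of requests that succeeded; 1.0 when there was no traffic."""
    if w.total_requests == 0:
        return 1.0
    return (w.total_requests - w.failed_requests) / w.total_requests


def on_time_ratio(w, limit_min):
    """Fraction of job runs that finished in under `limit_min` minutes."""
    if not w.job_durations_min:
        return 1.0
    on_time = sum(1 for d in w.job_durations_min if d < limit_min)
    return on_time / len(w.job_durations_min)


def drain_time_min(w):
    """Minutes until the backlog clears, or infinity if it is not shrinking."""
    if w.queue_depth == 0:
        return 0.0
    net = w.process_per_min - w.enqueue_per_min
    if net <= 0:
        return math.inf
    return w.queue_depth / net


# Made-up sample data: 5% of requests fail and one of four ETL runs is late.
EXAMPLE = Window(
    total_requests=2000,
    failed_requests=100,
    job_durations_min=[22, 27, 31, 25],
    queue_depth=600,
    enqueue_per_min=40.0,
    process_per_min=60.0,
)

if __name__ == "__main__":
    print(f"success rate: {success_rate(EXAMPLE):.1%}")
    print(f"ETL runs under 30 min: {on_time_ratio(EXAMPLE, 30):.0%}")
    print(f"queue drains in: {drain_time_min(EXAMPLE):.0f} min")
```

Each function returns a plain number, so an SLO becomes a comparison against a target, for example "success rate of at least 99% over the window". Reporting drain time rather than raw queue length is one possible design choice: it tells consumers how long they will wait, which is usually what they care about.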

Common Pitfalls

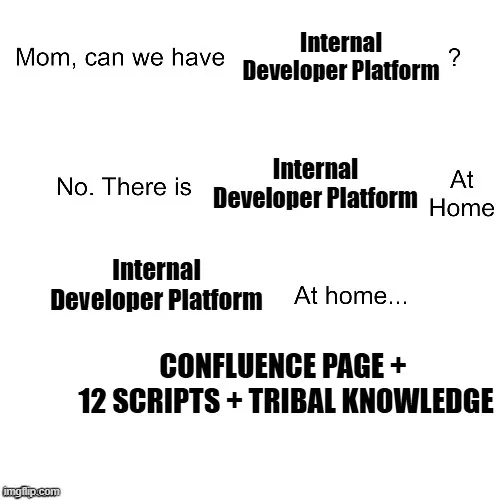

Even experienced teams sometimes misconfigure SLOs for internal services. Common mistakes include:

-

Focusing too much on uptime. Uptime is important, but if the service processes data incorrectly or is consistently slow, a “100% uptime” SLO doesn’t capture the real impact.

-

Ignoring downstream impact. Measuring only the internal service in isolation can hide cascading failures. Always consider the impact on dependent teams or systems.

-

Setting unrealistic targets. Internal services often operate under different constraints than external services. Extremely strict SLOs may force unnecessary over-engineering.

-

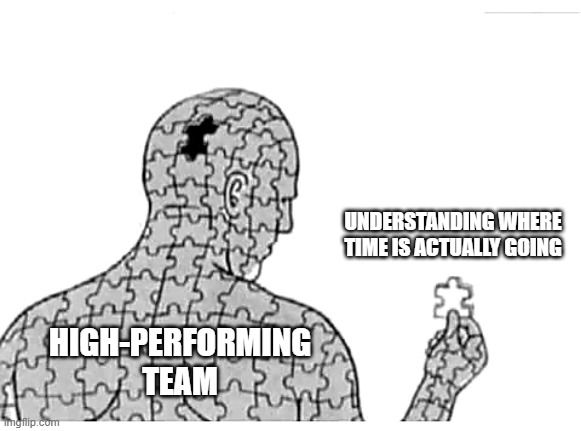

Neglecting observability. You can’t manage what you don’t measure. Instrument your services, pipelines, and jobs to collect reliable metrics from day one.
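To see why very strict targets can be unrealistic, it helps to convert a target into the amount of failure it actually tolerates. The sketch below is plain arithmetic; the function name and the 30-day window are assumptions made for the example, not a standard.

```python
def allowed_bad_minutes(target, window_days=30):
    """Minutes of failure that a target tolerates over the window."""
    if not 0 < target <= 1:
        raise ValueError("target must be a fraction in (0, 1]")
    return (1 - target) * window_days * 24 * 60


if __name__ == "__main__":
    for target in (0.99, 0.999, 0.9999):
        minutes = allowed_bad_minutes(target)
        print(f"{target:.2%} -> {minutes:.1f} min per 30 days")
```

A 99.9% target over 30 days leaves roughly 43 minutes of failure, and each extra nine divides that by ten. For an internal batch job that runs once a day, a budget that small may cost far more to meet than the downstream impact justifies.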

Putting It All Together

Defining SLOs for internal services requires a shift in perspective: your “users” are other teams or downstream systems, not external customers. By measuring success rates, latency, queue lengths, and error trends, and by correlating these metrics with downstream impact, you can set SLOs that truly guide operational decisions.

Start small, track what matters, and iterate. Internal SLOs are not just about avoiding failures — they’re about keeping the gears of your organisation running smoothly.
